Intro

Throughout every project, designers make countless decisions: fonts, microcopy, user flows, product structure, and the list goes on. The tricky part is figuring out whether these design choices were optimal, or at least good. This brings us to this article’s topic: how do you know if your design rocks?

A few disclaimers before we jump in:

The list of design validation methods presented in this article is by no means comprehensive. There are books upon books on design validation, and rightfully so.

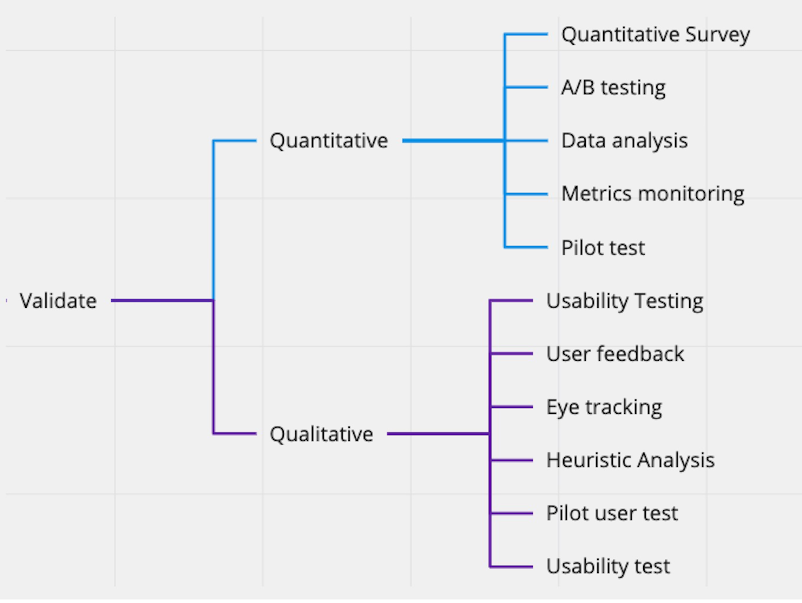

Here’s a good overview of the design validation methods. Feel free to check out the whole UX testing process here.

The ways to test your design presented in this article are the ones we employ the most often. Therefore, we deem them the most practical and share-worthy.

N.B. These methods work in conjunction. You can’t pick one and rely solely on its results; use as many as you can.

Design Validation through Usability Testing

Let’s kick off this list with the trusty old favorite: usability testing. This method rests on the axiom that design without users is not design, i.e. every major design decision should be tested on actual users. Only then should it be considered validated.

Usability testing is probably the most common and arguably the most potent design validation method, for a few reasons. Firstly, it involves interviewing actual or potential users, who are the most reliable source of information. Secondly, it usually relies on interview scripts, which let you compare answers to the same set of questions across participants. The downside is that usability testing is time-consuming and relatively expensive.

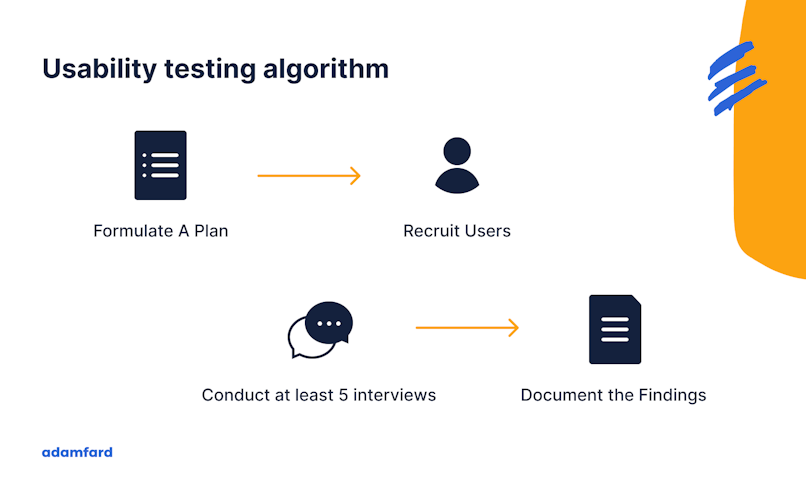

Usability testing generally involves the following processes:

1. Defining objectives;
2. Looking for users. Consider using the following resources:
   - Contact your existing users;
   - See if you can find your competitors’ (or potential) users. Here are a few places to look:
     - Facebook groups;
     - LinkedIn groups;
     - Try usertesting.com;
     - Reddit.
3. Creating task scenarios and conducting the interview;
4. Analyzing the data and turning it into insights.

Another important caveat is that you'll need 5 users per feature or group of features. According to research by Nielsen Norman Group, 5 is the optimal number of users to test a design on: five testers typically uncover about 85% of usability problems. Each additional tester mostly repeats what earlier ones already revealed, so returns diminish quickly, while testing with fewer users leaves more problems undiscovered.

Source: https://www.nngroup.com/articles/why-you-only-need-to-test-with-5-users/
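To make the diminishing returns concrete, here is a minimal Python sketch of the curve described in the NN/g article linked above: the share of problems found by n testers is modeled as 1 - (1 - L)^n, where L is the share a single tester uncovers. The 31% default is the average the article reports across its projects; your product's value may differ, and the function name is ours, purely for illustration.

```python
def share_of_problems_found(n_users: int, single_user_rate: float = 0.31) -> float:
    """Expected share of usability problems uncovered by n_users testers.

    Implements 1 - (1 - L)^n, where L (single_user_rate) is the share of
    problems one tester finds. The 0.31 default is an assumed average.
    """
    if n_users < 0:
        raise ValueError("n_users must be non-negative")
    return 1 - (1 - single_user_rate) ** n_users


# Print the curve for 1 to 8 testers to see where it flattens out.
for n in range(1, 9):
    print(f"{n} users: {share_of_problems_found(n):.0%}")
```

Under this model, each tester after the fifth adds only a few percentage points, which is why several small rounds of testing tend to pay off more than one big round.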

Hallway Testing & Peer Review

We designers love to judge someone else’s design. So when in doubt, have a designer friend or colleague take a look at your work. It’s an invaluable source of new ideas and feedback.

Hallway testing is pretty much yanking anyone you can lay your eyes on and having them interact with your design to gather feedback. Ideally, that “anyone” should be a potential user of the product you’re testing so that Lucy from accounting doesn’t review an app for ER nurses.

Think of both of these as quick-and-dirty usability testing. Did we mention that both of these methods are free?

10 Nielsen Heuristics

Analyzing and validating a product design through the lens of design heuristics is quite common, and it often comes naturally to experienced designers. Experienced or not, though, a designer should keep these heuristics top of mind. To help you do that, we’ve prepared a checklist and a template for Nielsen Heuristic Analysis. Ideally, print these templates and run an analysis session on your app.

We also have a comprehensive video that covers all aspects of heuristic evaluation. Consider taking a look at it if you prefer watching over reading.

Nielsen’s 10 heuristics are:

Visibility of System Status (users should know the status of the system and get feedback on interactions with it);

Match between the system and the real world (the system should resemble the experiences that users already had);

User control and freedom (users should be able to reverse their action if done by mistake);

Consistency and standards (similar system elements should look similar);

Error prevention (minimize the likelihood of making mistakes);

Recognition rather than recall (users should be able to interact with the system without having to remember prior information or context);

Flexibility and efficiency of use (both new and experienced users should be able to efficiently use the system);

Aesthetic and minimalist design (declutter as much as possible, less is more);

Help users recognize, diagnose, and recover from errors (make error messages understandable, and suggest ways to fix an error);

Help and documentation (if a user has a hard time interacting with your app, make sure there’s help that’s easily accessible).

These heuristics serve as principles on which your design should be based. Done correctly, these are a recipe for impeccable and validated design.

Design Validation through Analytics & Benchmarking

Metrics

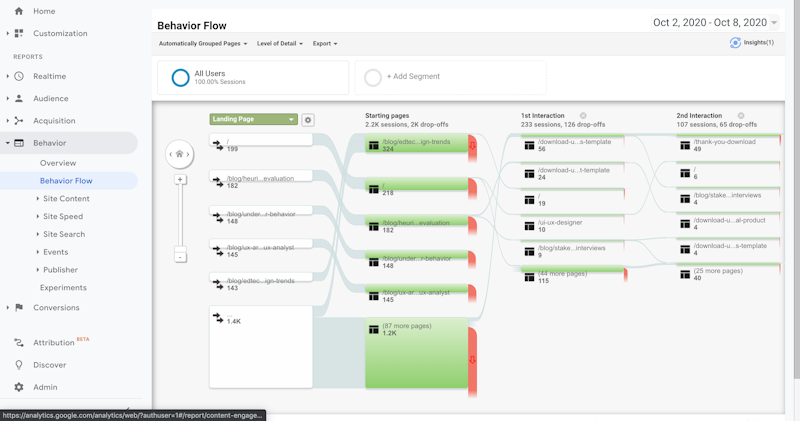

Design drives business, so good design should move key product metrics. Tracking those metrics before and after a design change is how you can estimate whether you made a good design decision. However, this retrospective method has its downsides. The data takes time to collect. Additionally, you’d have to ship as few changes at a time as possible to evaluate each decision separately, or else you won’t know what works and what doesn’t.

The screenshot is taken from Google Analytics

Benchmarking

Benchmarking partially offsets the downsides of retrospective analysis we’ve mentioned. Benchmarks are the industry standards for your product metrics. If your metrics are on par with the industry average, so is your design.

Heatmaps

Heatmaps are visual representations of how users interact with your product. Take a look at the heatmap below. The redder the area is, the more users click on it. Conversely, the bluer it is, the fewer clicks it gets.

Additionally, there are plenty of plugins for Figma, Sketch, and other UX tools that use AI to predict how users’ attention is distributed across the design elements. Luckily, this approach is free and doesn’t take much time. The predicted distribution should ideally coincide with the information architecture and hierarchy you’re going for. If it doesn’t, you know what to focus on. Take a look at the gif below to get a better idea of what we’re talking about.

A/B Testing

In a nutshell, A/B testing means creating multiple versions of the same piece of functionality and seeing which one performs best. It’s a potent method, but it takes time for the data to accumulate, and you’ll need developers to help you implement the test.

This method is about finding out which design version is better, so you need at least two versions of the same design. Also note that the versions should differ as little as possible, ideally by one tweak per version.
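Once the data has accumulated, you still need to decide whether the difference between versions is real or just noise. One common way to check is a two-proportion z-test on conversion rates. The sketch below is a minimal illustration using only Python's standard library; the function name and the visitor and conversion numbers are made up, and your analytics or experimentation tool may use a different statistical method.

```python
from math import sqrt
from statistics import NormalDist


def ab_test_p_value(conv_a: int, visitors_a: int, conv_b: int, visitors_b: int) -> float:
    """Two-sided p-value of a two-proportion z-test on conversion rates."""
    rate_a = conv_a / visitors_a
    rate_b = conv_b / visitors_b
    # Pooled conversion rate under the assumption that A and B perform the same.
    pooled = (conv_a + conv_b) / (visitors_a + visitors_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / visitors_a + 1 / visitors_b))
    if std_err == 0:
        # Nobody (or everybody) converted in both versions: no detectable difference.
        return 1.0
    z = (rate_b - rate_a) / std_err
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Hypothetical experiment: version A converts 120 of 2400 visitors, version B 156 of 2400.
p = ab_test_p_value(conv_a=120, visitors_a=2400, conv_b=156, visitors_b=2400)
print(f"p-value: {p:.4f}")
```

A small p-value (0.05 is a conventional threshold) suggests the difference is unlikely to be chance alone. It says nothing about why one version won, which is another reason to keep it to one tweak per version.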

Design Validation Through Stakeholder Interviews

So far, we've been talking about validating designs from the perspective of the users. However, there's another layer to validation that isn't talked about as much: stakeholder interviews. In a nutshell, stakeholders are all the people who have an impact on the product you're designing, such as marketers, executives, salespeople, developers, and governments. The kind of stakeholders you'll be dealing with will vary depending on the product.

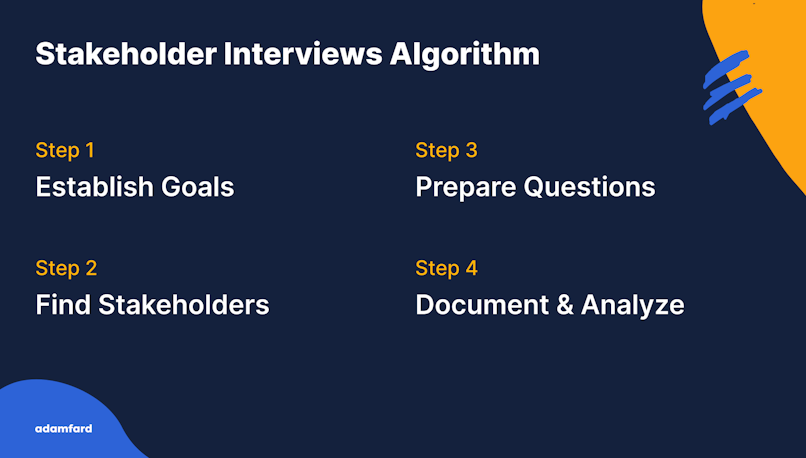

That said, stakeholders, not users, are the ones who give the ultimate approval. As such, to save yourself the headache of redoing work and blowing deadlines, you'll need to consult quite a few people. Long story short, the stakeholder interview algorithm looks as follows:

If you wanna learn more, we have a full article on stakeholder interviews that also features a template you could use.

Outro

Validating your design is not an easy task. However, we hope that it’s now a tad easier for you. Make sure to combine users’ feedback and user-generated analytics and you should nail your design.

Design Validation Checklist

Download our checklist on design validation to make sure your decisions are well-informed and have research to back them up:

FAQ

What is design validation and verification?

Design validation is gauging the performance of a design before implementing it.

How do you validate product design?

In a nutshell, you have your users interact with a piece of design and gauge its performance.

How do you validate the usability of a design?

Usability testing is the best way to test usability. It usually entails interviewing at least 5 users to measure the design's performance.