“I am constantly amazed by man’s inhumanity to man.”

Primo Levi, If This Is a Man, 1947.

We’re at a moment in history when digital products have become nearly inseparable from our lives. The experiences we design have a real and tangible effect on the people we create them for.

Design ethics isn’t a new thing. We’ve been having this conversation since long before the digital era, but it’s fair to say that it has never been as important as it is today.

As designers, we should make a continuous effort to make our products more ethical, thoughtful, and safe for people. In this article, we’d like to explore some fundamental ideas of ethical design that all UX professionals should keep in mind.

Let’s dive right in, shall we?

Pillars of ethical software design

To many, ethics in general seems to be a very wishy-washy topic because it lacks a quantitative perspective. The argument goes that we can’t calculate how ethical a product is, so there’s no objective way of assessing its ethical implications. That is, of course, false.

While moral philosophy is an extremely broad field, there are fairly simple frameworks that can help us establish whether a digital product or experience aims to satisfy user needs without harming them.

One good example is the “Ethical Hierarchy of Needs” pyramid developed by Aral Balkan and Laura Kalbag.

It’s a graphic illustration of the fundamental pillars of ethical design and of how each one depends on the others to make an experience ethical. First and foremost, an experience must respect a human’s inherent rights. Whenever a design infringes on them, it is immediately disqualified from being considered morally sound.

Once we’ve established that a design respects human rights, we need to understand whether it’s functional: does the product give its users a convenient and reliable way to perform tasks? And last, but by no means least, a design should aim to be delightful, which is impossible if the previous steps have been disregarded.

Now, let’s take a more detailed look at the things you can do to create morally sound, ethical designs.

Ethics should be part of your DNA

Put simply, unethical and deceptive design is created by people who make unethical design decisions. In turn, unethical design decisions are more likely to be made in companies that don’t value ethics.

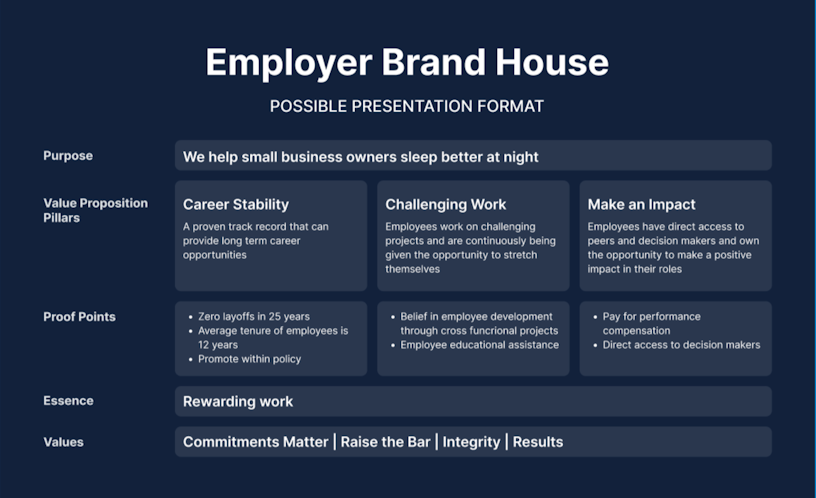

Below, we’ve schematized how core company values propagate into tactical design decisions. We’re confident that one of the most reliable ways of eliminating morally questionable design is to address the problems that lie in the company’s values and culture.

It’s also worth mentioning that organizational culture and values tend to be documented and then forgotten or disregarded. A company’s goal is not only to develop a culture but also to make a conscious effort to embody and reiterate it.

When it comes to creating values, here is the exercise that we use. The brand house structure helps you answer key questions that make up your company’s DNA.

Accessibility

Another vital element of ethical design is accessibility, which revolves around making sure that all people can use your product or service regardless of any impairments or disabilities they may have.

We need to take into account that about 15% of the world’s population lives with some form of impairment. It’s fair to assume that this percentage roughly carries over to your user base as well. Making sure that these users can comfortably use your product has both pragmatic and ethical value.

Despite these numbers, few organizations make their products and websites truly accessible. For instance, a study conducted by WebAIM suggests that most websites do not offer a fully accessible experience. They found that fewer than two percent of the world’s top million websites offer accessible content to people with disabilities.

To help you better empathize with users who have impairments, we’ve developed a prototype that emulates how people with various disabilities and impairments experience design.

In practical terms, ensuring good accessibility means paying attention to things like contrast, colors, typography, and media. To help you navigate the complexities of software accessibility, we’ve prepared an ultimate checklist 👇

The Ultimate Accessibility Checklist

Get a free copy of our accessibility checklist that features best practices, activities and tools to help ensure your product is accessible.
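Of the items mentioned above, contrast is one that can be checked numerically. The sketch below is our own illustration, not part of the checklist: it assumes the WCAG 2.x definitions of relative luminance and contrast ratio, along with the AA thresholds of 4.5:1 for normal text and 3:1 for large text. The function names are hypothetical.

```python
def _linearize(channel: int) -> float:
    """Convert an 8-bit sRGB channel to linear light (WCAG 2.x formula)."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    """Relative luminance of a '#rrggbb' color: 0.0 is black, 1.0 is white."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(foreground: str, background: str) -> float:
    """Contrast ratio between two colors, from 1.0 (identical) to 21.0."""
    l1 = relative_luminance(foreground)
    l2 = relative_luminance(background)
    lighter, darker = max(l1, l2), min(l1, l2)
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa(foreground: str, background: str, large_text: bool = False) -> bool:
    """Check a color pair against the assumed WCAG AA thresholds."""
    threshold = 3.0 if large_text else 4.5
    return contrast_ratio(foreground, background) >= threshold
```

For example, `contrast_ratio("#000000", "#ffffff")` gives the maximum ratio of 21:1, while mid-gray text on a white background sits right at the AA boundary, which is why near-identical grays can land on opposite sides of it. A check like this covers only one accessibility criterion and is no substitute for testing with real users.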

Inclusivity and equality

At first glance, the field of product design may not seem like something that could lack inclusivity. You’re designing for anyone who would use your product, right? Well, yes, and no—there’s a little more nuance.

All people have implicit biases against others—there’s no way around it. Feel free to find your own.

Given that we are the ones designing and programming technology, these biases will inevitably make their way into the products we create.

For instance, in 2017, we saw a report suggesting that a machine-learning-based tool used by US courts to assess the probability of prisoners reoffending was heavily biased against black people.

Similar issues have permeated voice assistant software. It appears that the devices are better at recognizing the speech of white Americans than that of black Americans. The devices misidentified 19% of words pronounced by white people, and 2% of the sequences were deemed unreadable. The same statistics clock in at 35% and 20% for black people.

Even the icons and avatars used throughout interfaces are often subject to bias. A study published in the Journal of Psychosocial Research on Cyberspace states that “when asked to pick a typical human, people are more likely to pick a man than a woman, a phenomenon reflecting androcentrism.”

Usability

The least you could hope for when designing a product is making it usable. To help you make more sense of what usability is, here’s how Jakob Nielsen, the co-founder of Nielsen Norman Group, deconstructs the term:

Learnability—how easy is it for first-time users?

Efficiency—how quickly can users perform tasks?

Memorability—what is the experience for returning users?

Errors—how many errors do users make, and how severe are these errors?

Satisfaction—how pleasant is it to use the design?

Generally, usability is meant to be invisible. Good design is seamless, whereas bad design will immediately make itself seen and felt. Why make your users’ experience harder than it needs to be?

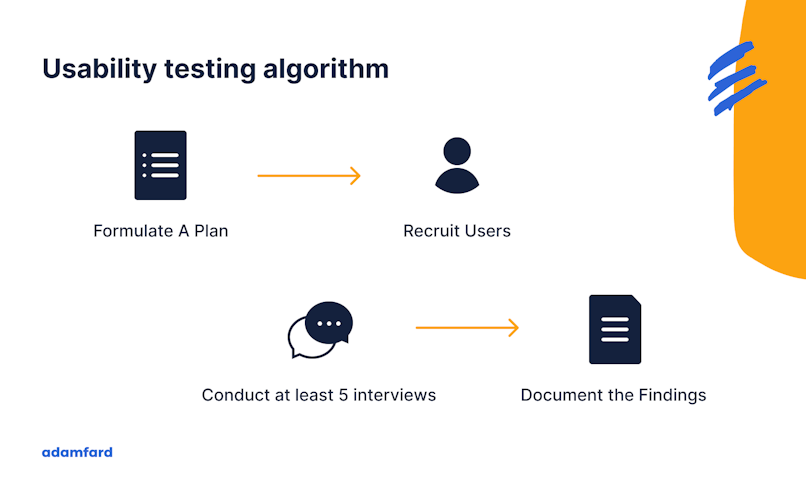

Ensuring good usability really comes down to following due process. In this context, the best way to address potential usability issues revolves around thorough user interviews and usability testing. Both of these require face-to-face interaction with users to gather first-hand accounts as to how well a piece of design addresses their needs.

Here is a simple framework we use to eliminate usability issues:

Transparency

Regardless of whether transparency is part of your core values or not, users expect it. Even though you might think that it’s implied, let’s look at the data.

The only publicly available dark pattern research we’re aware of was conducted on shopping websites. It concluded that about 11% of the sampled sites featured dark patterns.

Dark patterns are, by definition, misleading, which means that they inherently lack transparency. They should be viewed as the antithesis of transparency.

The best way of counteracting a lack of transparency is empathy-driven decision-making. Human standards for transparency are pretty universal, so it’s quite difficult to unintentionally design something misleading. Standard elements of the design process, such as usability testing, should help eliminate these issues.

Privacy and data protection

As a designer, you’re unlikely to have much impact on matters of privacy and data collection. Usually, these decisions are made by legal, marketing, and business stakeholder teams.

However, you do have an impact on how these policies get communicated.

One of the more recent data protection regulations is GDPR, which provides for:

The requirement that whenever organizations collect data from their users, they must do so in a secure manner;

Heavy fines for security and data breaches;

The permission for organizations to collect data from users only after they’ve given their consent. Companies must ask for that consent in a clear and explicit manner and, more importantly, must allow users to withdraw it easily;

The “right to be forgotten,” which means that people have the right to have their data removed if they would like to;

People’s access to the information companies have collected about them, as well as awareness of how that information is processed;

Data portability, which allows people to receive their data from one company and transfer it to another;

Heavy fines for non-compliance.

Business vs. ethics conflicts

Whatever minute benefit you gain from employing unethical tactics will be overshadowed by eroding your users’ and customers’ trust. All the press releases and apologies in the world will not bring them back once they leave.

It should also be noted that, in our estimation, some industries have turned dark patterns into best practices. In our experience, one of the fields most prone to employing dark patterns is travel & hospitality. Many low-cost airlines worldwide are prime examples of bad UX practices.

A great number of such services display prices lower than what you will end up paying, create a false sense of urgency (“only 3 seats left,” “17 people are booking this right now”), and try to upsell at every turn.

The bottom line

Ethical design should theoretically be the bare minimum, but for some reason, it ends up being the pinnacle of good design. As UX specialists and designers, it’s our responsibility to adopt morally sound design practices for our own good, for the people around us, and for the environment as a whole.